FOLLOW-ME

A human-machine interface for unmanned ground vehicles

With Follow-Me, unmanned ground vehicles (UGVs) become true support agents on human-robot teams

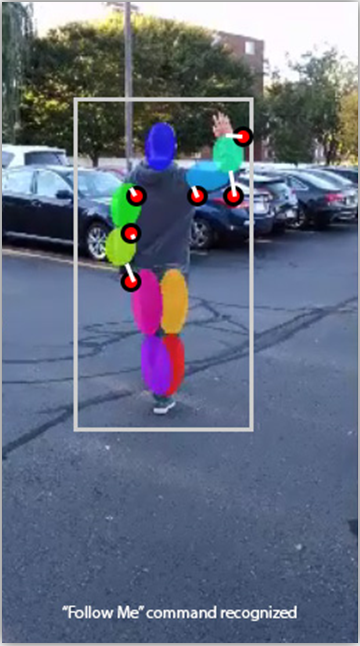

The Follow-Me human-machine interface lets a UGV accompany a human operator, like a fellow squad member, through challenging outdoor environments. It responds to speech and gesture commands, provides verbal and non-verbal feedback, maintains formation, and avoids obstacles along the way.

The interface works with onboard autonomy software so the UGV can act as both a scout and a robotic mule, carrying cumbersome equipment and eliminating the need for remote-control piloting. The system also provides speech-based natural language processing, enabling hands-free control and feedback.

“The first easy-to-use and easy-to-understand autonomous ground systems that can earn the trust of human operators will mark a turning point in the adoption and deployment of UGVs as true support agents within human-robot teams.”

Camille Monnier, Principal Scientist

Features

- Hands-Free Following – A mobile robot can follow a leader on its own, without the need for a remote control.

- Gesture-Based Controls – The robot can be controlled using natural hand gestures, such as raising or lowering a hand, or pointing in the direction the robot should go.

- Voice Commands – The robot can be controlled using simple verbal commands, such as “follow me,” “back off,” “come closer,” or “drive two meters to your 6 o’clock.”

- Obstacle Avoidance – The robot automatically avoids obstacles and hazards during navigation.

- Easy Integration – Follow-Me integrates with existing commercial off-the-shelf (COTS) computing and sensing hardware and is also available as a standalone hardware product. It also integrates with ROS-enabled platforms.
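A command such as “drive two meters to your 6 o’clock” implies converting a clock-face bearing into a displacement relative to the robot. The Python sketch below illustrates that arithmetic only; it is not the Follow-Me implementation. It assumes a robot-centric frame following the ROS REP 103 convention (x forward, y left), and the function name `clock_to_offset` is hypothetical.

```python
import math


def clock_to_offset(distance_m: float, clock_hour: float) -> tuple[float, float]:
    """Convert 'drive D meters to your H o'clock' into an (x, y) offset in meters.

    Hypothetical helper. Assumes a robot-centric frame per ROS REP 103:
    +x points forward (12 o'clock) and +y points left (9 o'clock).
    """
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    # Each clock hour spans 30 degrees. Clock positions advance clockwise,
    # while REP 103 angles increase counterclockwise, hence the minus sign.
    theta = -math.radians((clock_hour % 12) * 30.0)
    x = distance_m * math.cos(theta)
    y = distance_m * math.sin(theta)
    # Round away floating-point noise (e.g. 1e-16); adding 0.0 turns -0.0 into 0.0.
    return round(x, 9) + 0.0, round(y, 9) + 0.0


if __name__ == "__main__":
    print(clock_to_offset(2, 6))  # two meters directly behind the robot
```

For example, `clock_to_offset(2, 6)` returns `(-2.0, 0.0)`, a point two meters straight behind the robot, while `clock_to_offset(1, 3)` returns `(0.0, -1.0)`, one meter to its right.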

Contact us to learn more about Follow-Me and our other robotics and autonomy capabilities.

The Follow-Me technology was originally developed under the MINOTAUR effort for the US Army.

MINOTAUR was featured in an Aerospace and Defense Technology issue on Unmanned Systems. Read more in the article “Developing a Multi-Modal Robot Control Interface.”