CAMEL

Advancing the emerging field of explainable AI (XAI)

You’re a small-unit leader defending a strategic fortified position from an approaching enemy. You don’t have the manpower to do it on your own, but an unmanned ground vehicle (UGV) could provide the logistics and reconnaissance support you need. The UGV is controlled by an artificial intelligence (AI) that performs effectively on average, but it can make inexplicable errors that lead to catastrophic results. Without knowing when and whether to trust this potential teammate, you can’t take the risk of relying on it.

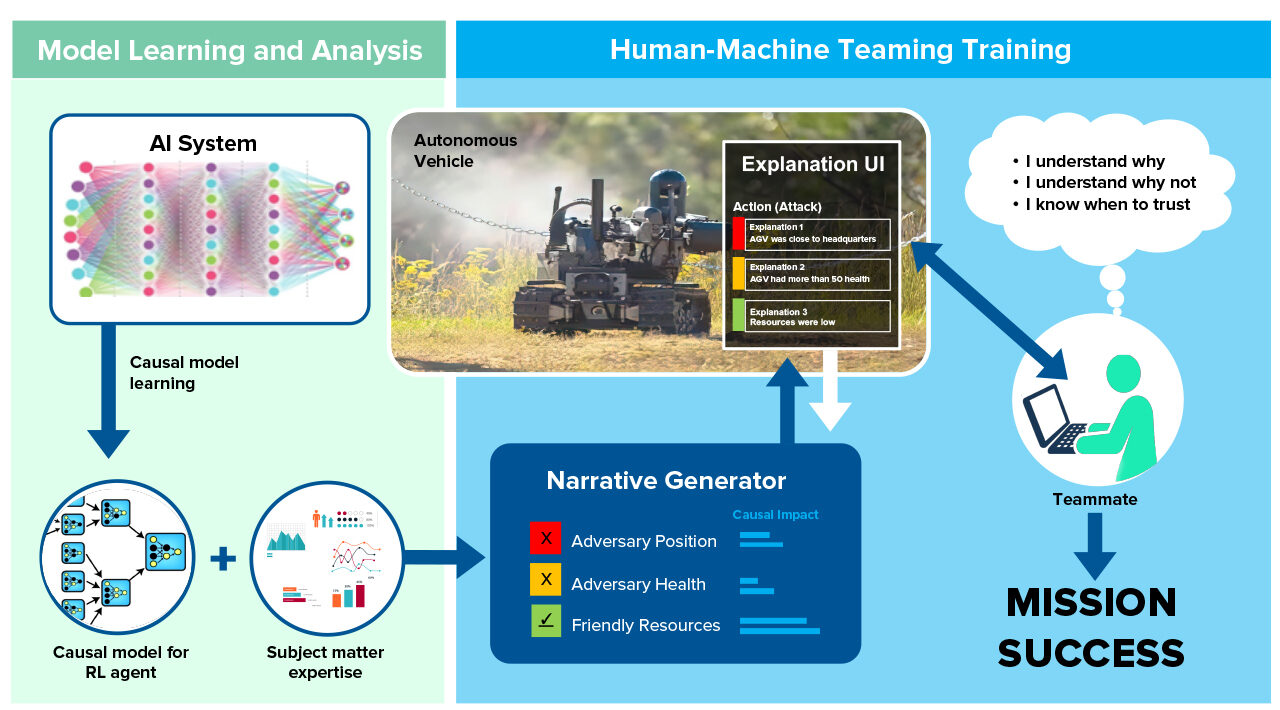

CAMEL (Causal Models to Explain Learning) aims to change this scenario by providing accurate, understandable explanations of AI system decisions. For example, CAMEL can explain how an AI performs classification, such as detecting pedestrians in images, or autonomous decision-making, such as in game environments.

The Need for Explainability

True autonomy requires trust

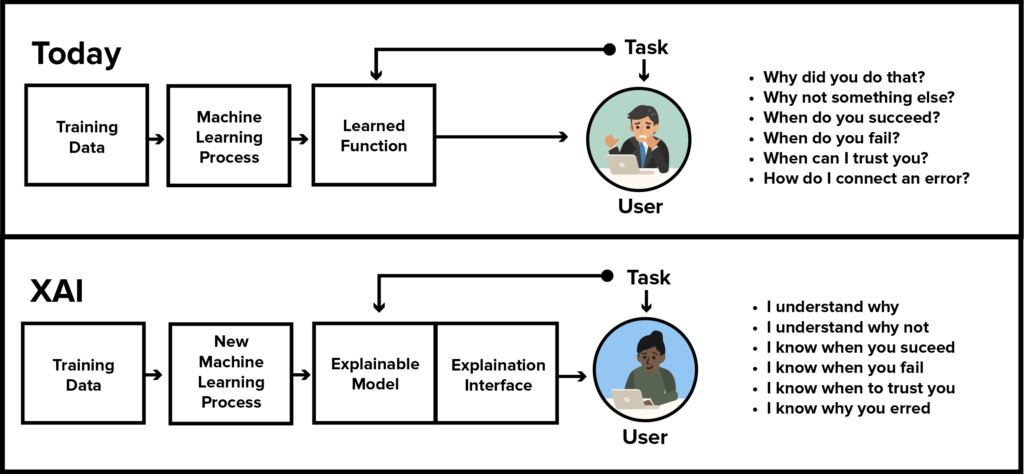

Human-machine teaming is vital to the future of AI. In AI systems based on deep reinforcement learning (RL), the RL agent’s decision-making process is a “black box.” As a result, some users try to explain system behavior with incorrect “folk theories,” which can lead to dangerous outcomes.

The Defense Advanced Research Projects Agency (DARPA) is funding multiple efforts to make AI more explainable by combining machine learning techniques with human-computer interaction design.

The CAMEL Framework

Explanations based on causal models

CAMEL is a novel framework that explains deep RL techniques for data analysis and autonomous systems. It unifies causal modeling with probabilistic programming. Causal models describe how one part of a system influences other parts of the system. The models help describe the real driving forces of system behavior.
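As a rough illustration of what a causal model is, the sketch below encodes a tiny structural causal model in Python. It is not CAMEL's implementation: the variable names, the equations, and the `evaluate` helper are all hypothetical, and the model is deterministic, so it leaves out the probabilistic programming side entirely. Each variable is computed from its causes, and an intervention (`do`) overrides one variable to show how the change flows to the variables downstream of it.

```python
from typing import Callable, Dict, Optional

# A structural equation computes one variable from the values of its causes.
Equation = Callable[[Dict[str, float]], float]

# Hypothetical toy model; names and equations are illustrative assumptions.
# Entries are listed in causal order: causes appear before their effects.
MODEL: Dict[str, Equation] = {
    "enemy_health": lambda v: 80.0,
    "own_health": lambda v: 120.0,
    "threat": lambda v: v["enemy_health"] / v["own_health"],
    "attack": lambda v: 1.0 if v["threat"] < 1.0 else 0.0,
}


def evaluate(model: Dict[str, Equation],
             do: Optional[Dict[str, float]] = None) -> Dict[str, float]:
    """Compute every variable in order, replacing intervened variables with fixed values."""
    values: Dict[str, float] = {}
    for name, equation in model.items():  # dicts preserve insertion order
        if do is not None and name in do:
            values[name] = do[name]       # intervention: ignore the variable's usual causes
        else:
            values[name] = equation(values)
    return values


observed = evaluate(MODEL)
intervened = evaluate(MODEL, do={"enemy_health": 120.0})
print(observed["attack"], intervened["attack"])
```

Intervening on `enemy_health` changes `threat` and `attack`, while intervening on `threat` directly would leave `enemy_health` untouched. That asymmetry is what separates a causal model from a purely correlational one.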

CAMEL creates counterfactuals—explanations of the agent’s behavior when it’s not what the user expected. For example, a user asks, “Why did the agent attack?” and CAMEL’s Explanation User Interface answers, “If the health of enemy-294 had been 50% higher, the agent would have retreated.”
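A minimal Python sketch can make the shape of such a counterfactual explanation concrete. It is not CAMEL's method: `toy_policy` is a hypothetical hand-written rule standing in for a trained RL agent, the state fields are invented for illustration, and the search is a simple perturbation of one variable rather than inference over a causal model. It looks for the smallest increase in enemy health that changes the agent's action, then phrases the result like the example above.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class State:
    """Hypothetical game state; the fields are illustrative assumptions."""
    own_health: float
    enemy_health: float


def toy_policy(state: State) -> str:
    """Stand-in for a trained agent: attack only when the enemy is weaker."""
    return "attack" if state.enemy_health < state.own_health else "retreat"


def scaled(state: State, pct: int) -> State:
    """Return a copy of the state with enemy health raised by pct percent."""
    return replace(state, enemy_health=state.enemy_health * (1 + pct / 100))


def counterfactual_increase(state: State, step_pct: int = 5,
                            max_pct: int = 200) -> Optional[int]:
    """Smallest tested percentage increase in enemy health that changes the action."""
    actual = toy_policy(state)
    for pct in range(step_pct, max_pct + 1, step_pct):
        if toy_policy(scaled(state, pct)) != actual:
            return pct
    return None  # no tested increase changes the action


def explain(state: State) -> str:
    """Phrase the counterfactual as a sentence for the user."""
    actual = toy_policy(state)
    pct = counterfactual_increase(state)
    if pct is None:
        return f"No tested increase in enemy health changes the action '{actual}'."
    alternative = toy_policy(scaled(state, pct))
    return (f"The agent chose '{actual}'. If enemy health had been {pct}% higher, "
            f"it would have chosen '{alternative}'.")


print(explain(State(own_health=120.0, enemy_health=80.0)))
```

With the assumed state above, the toy rule flips from attacking to retreating once enemy health is 50% higher. The percentage grid (`step_pct`, `max_pct`) is a design shortcut; a real system would need a principled way to choose which variables to vary and by how much.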

When developing CAMEL, Charles River Analytics led a team that included Brown University, the University of Massachusetts at Amherst, and Roth Cognitive Engineering. The four-year contract with DARPA was valued at close to $8 million. The project drew on Charles River’s expertise in machine learning and human factors.

Results

CAMEL approach leads to enhanced user trust

The Charles River project team demonstrated that the CAMEL approach led to enhanced user trust and system acceptance when classifying images of pedestrians. Then, the team took on a more exciting challenge—training RL agents that teamed with humans to play the real-time computer strategy game StarCraft II.

An evaluation of CAMEL supported all major hypotheses, showing that participants:

- Gave high ratings to CAMEL explanations, finding them usable, useful, and understandable

- Formed a more accurate mental model of the AI agent

- Showed improved use of the AI agent, following agent recommendations in situations where the agent performs well, but not in situations where it performs poorly

- Showed improved task performance, achieving higher scores in StarCraft II

The Explanation User Interface displays the AI’s level of confidence, the actions taken, and the variables considered.

CAMEL and the Future

Using AI in new and challenging domains

CAMEL is poised to address explainability and human-machine teaming in new and challenging domains, such as Intelligence, Surveillance, and Reconnaissance (ISR) and Command and Control (C2). For decision-makers faced with life-or-death situations, CAMEL’s explanations are vital to effective interpretation and application of recommendations from AI systems. CAMEL will affect the way that AI systems are deployed, operated, and used inside and outside the Department of Defense.

CAMEL is a cornerstone of Charles River’s emerging leadership in XAI. The project contributed to a fundamental understanding of challenges in the field and identified directions for future work, as described in “Brittle AI, Causal Confusion, and Bad Mental Models: Challenges and Successes in the XAI Program.” Other projects in this domain include ALPACA and DISCERN.

Contact us to learn more about CAMEL and our other XAI capabilities.

This research was developed with funding from the Defense Advanced Research Projects Agency (DARPA). The views, opinions and/or findings expressed are those of the author and should not be interpreted as representing the official views or policies of the Department of Defense or the U.S. Government.