Charles River Analytics Inc., a developer of intelligent systems solutions, is building a maritime defense tool for the Office of Naval Research (ONR). Under its Topside Optical Processing for Global Unmanned Navy (TOPGUN) effort, Charles River is extending the detection and classification capabilities of an autonomous surface vessel. The eighteen-month program is valued at $1.6 million.

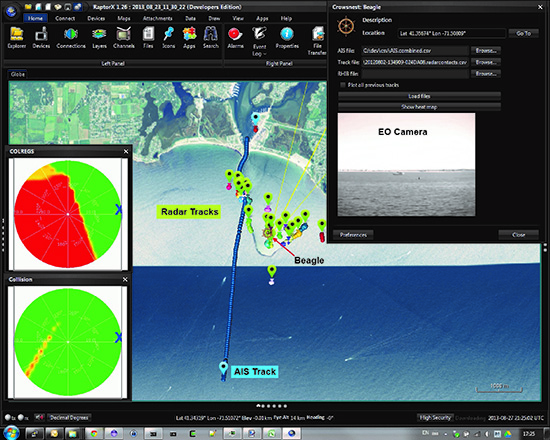

One critical function to test on the vessel is compliance with the International Regulations for Preventing Collisions at Sea (COLREGS), which requires perception algorithms that can reliably detect and classify ships to support safe navigation. Under Charles River’s earlier CROWSNEST effort, a data-driven Object Detection Framework (ODF) was used for fast and reliable ship detection. Convolutional neural networks were then applied to classify the detected ships into the appropriate COLREGS classes based on their appearance.

With TOPGUN, Charles River is continuing this critical autonomy development, extending detection and classification capabilities with efficient deep learning algorithms to detect more distant targets, recognize a wider range of ship types, and demonstrate performance on the water. TOPGUN software will be hardened to meet information assurance requirements and tested at sea.

“We’re excited about the TOPGUN effort because it allows us to refine our already impressive CROWSNEST ship detection and classification results and demonstrate these capabilities on a truly innovative platform,” said Ross Eaton, Principal Scientist at Charles River Analytics. “This demonstration will improve safety and reliability by supporting small craft detection and COLREGS compliance, necessary steps on the path to enabling the full range of autonomous capabilities. Because TOPGUN is camera-agnostic, we can apply this same technology to new programs and new platforms, providing autonomous ship detection and classification in timeframes that are unmatched.”

“TOPGUN is just one of many ongoing Charles River efforts across the unmanned systems domain where we bring extensive experience and capabilities to the challenges of deploying truly autonomous robotic platforms. We apply our state-of-the-art sensor processing and AI technologies to on-board autonomy that enables unmanned systems to ‘see,’ understand, and respond independently to the operating environment,” commented Rich Wronski, Vice President of Charles River’s Sensing, Perception, and Applied Robotics division.

This material is based upon work supported by the Office of Naval Research under Contract No. N68335-17-C-0154. Any opinions, findings and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the Office of Naval Research.