Charles River Analytics received more than $9M from the US Department of Defense to develop modeling tools that make artificial intelligence (AI) systems more resilient to failure.

As AI systems are increasingly called into service, there’s a greater need for human-machine interfaces (HMI) that keep the operator in the loop. With these interfaces, if an AI model is not robust enough to support an appropriate response, a human has enough situational awareness (SA) of the process to take control and prevent catastrophic failure.

However, if engineers do not address these SA demands early in the model development process, gaps in systems surface too late, when the AI product is already on the front lines. To address this issue, the Defense Advanced Research Projects Agency (DARPA) created the Enhancing Design for Graceful Extensibility (EDGE) program, which aims to incorporate HMI-based principles early in the design process.

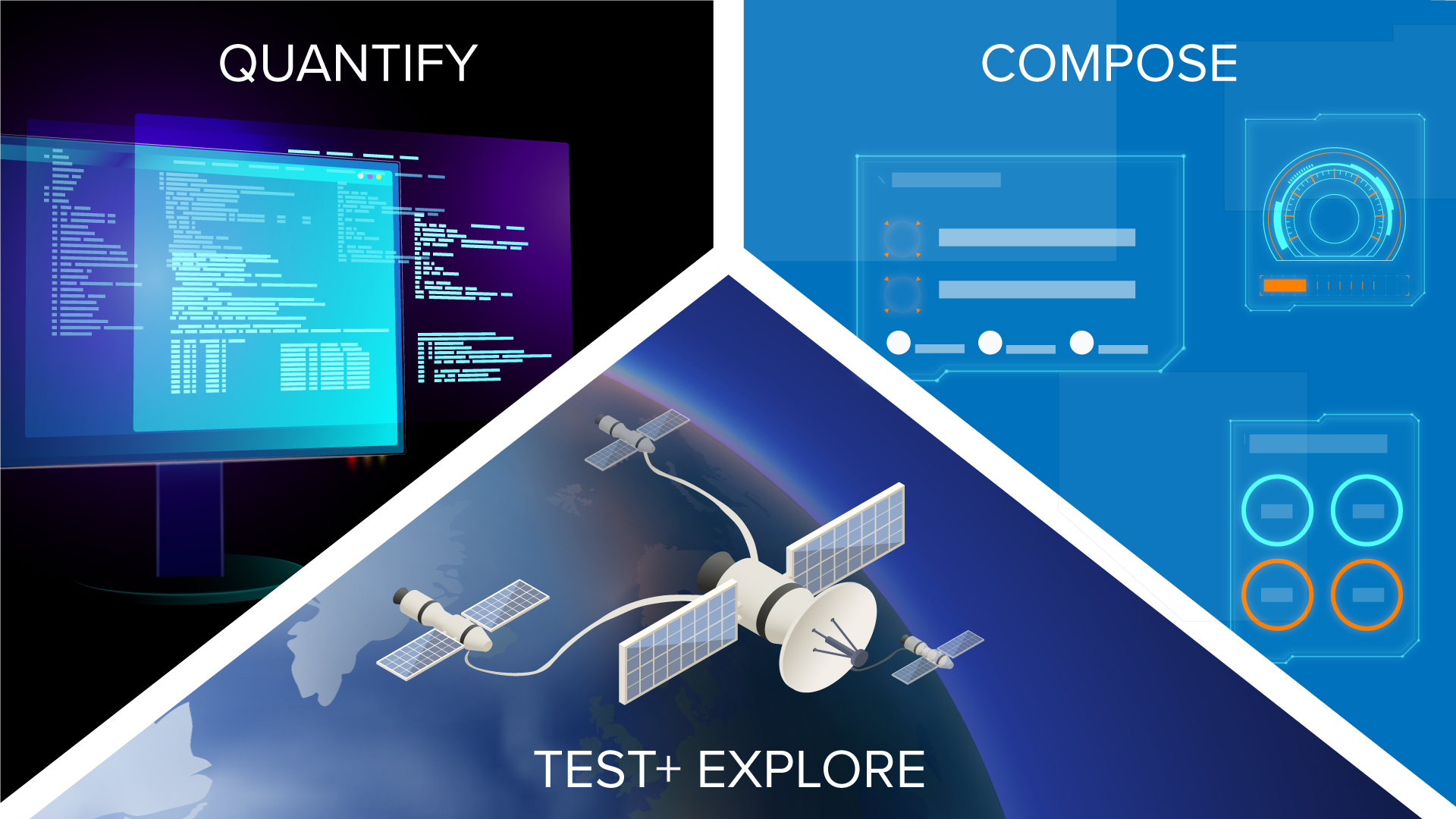

Charles River Analytics is developing PERCEPTS (Probabilistic Engine for Representing and Computing Enhanced Presentation Techniques for SA) for the air and space domains in response to the EDGE program.

Approaches that use traditional model-based engineering designs often fail to address HMI issues, explains Sean Guarino, Principal Scientist at Charles River Analytics and PERCEPTS project manager. “We iterate on ideas very quickly and at a potentially abstract level. But there aren’t any tools to do that from an HMI perspective,” Guarino says. PERCEPTS addresses that gap. “The goal of the program is to bring [HMI-based] modeling early into the systems design process, before a model is built, to enable earlier detection of SA gaps and change the design to meet SA needs.”

PERCEPTS uses probabilistic programming, specifically the outcomes of modeling if-then scenarios, to rate whether (and how well) early-stage HMI designs meet a set of SA factors. Beyond the net design score, it can also rate the SA capabilities of the individual components that make up the design.

PERCEPTS then uses probabilistic models to suggest changes to the design, by adding or taking away system components, in an iterative loop that can improve the design’s score. The goal is to automate design creation so that even those with limited expertise can build reliable, SA-aware AI systems, and to make HMI a priority early in the design process.
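To make the score-and-iterate idea concrete, here is a minimal Python sketch. It is not the PERCEPTS implementation, whose internals are not described here. The component names, probabilities, and clutter penalty are invented for illustration. The three SA factors (perception, comprehension, projection) are borrowed from Endsley's widely used model of situational awareness rather than from the project itself. Plain Monte Carlo sampling stands in for a real probabilistic programming engine, and a simple greedy search stands in for the design-suggestion loop.

```python
import random

# Hypothetical components: assumed probability that each one supports a given
# SA factor in a randomly sampled scenario. All values are invented.
COMPONENTS = {
    "alert_banner":   {"perception": 0.9, "comprehension": 0.3, "projection": 0.1},
    "trend_plot":     {"perception": 0.4, "comprehension": 0.6, "projection": 0.7},
    "status_summary": {"perception": 0.5, "comprehension": 0.8, "projection": 0.2},
    "raw_log_view":   {"perception": 0.2, "comprehension": 0.1, "projection": 0.0},
}
FACTORS = ("perception", "comprehension", "projection")
CLUTTER_PENALTY = 0.05  # assumed cost of each extra component on the display


def score(design, samples=5000, seed=0):
    """Monte Carlo estimate of how often the design covers each SA factor,
    averaged over factors, minus a penalty per component."""
    rng = random.Random(seed)      # fixed seed keeps runs repeatable
    parts = sorted(design)         # stable order regardless of set hashing
    covered = 0
    for _ in range(samples):       # each sample is one "what if" scenario
        for factor in FACTORS:
            # The factor is covered if at least one component supports it.
            if any(rng.random() < COMPONENTS[c][factor] for c in parts):
                covered += 1
    coverage = covered / (samples * len(FACTORS))
    return coverage - CLUTTER_PENALTY * len(parts)


def improve(design, max_steps=10):
    """Greedy loop: try adding or removing one component at a time and keep
    the best-scoring change until no change improves the score."""
    design = frozenset(design)
    best = score(design)
    for _ in range(max_steps):
        candidates = [design | {c} for c in COMPONENTS if c not in design]
        if len(design) > 1:
            candidates += [design - {c} for c in sorted(design)]
        top = max(candidates, key=score)
        top_score = score(top)
        if top_score <= best:
            break                  # no single change helps; stop iterating
        design, best = top, top_score
    return design, best


if __name__ == "__main__":
    final_design, final_score = improve({"raw_log_view"})
    print(sorted(final_design), round(final_score, 3))
```

Starting from a weak single-component design, the loop adds components that raise estimated SA coverage and drops ones whose contribution does not justify the added clutter. A real tool would replace the invented probability table with models of operators, tasks, and scenarios.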

The potential commercial applications are wide-ranging, Guarino says. “This kind of early design iteration with HMI design would be incredibly useful wherever large-scale systems are being built: organizations that work in system design, for example, or those building large aircraft,” he adds. “And it can revolutionize the way we do HMI design right now.”

Contact us to learn more about PERCEPTS and our capabilities in HMI-centric design.

This material is based upon work supported by the Defense Advanced Research Projects Agency (DARPA) Defense Sciences Office (DSO) and the Naval Information Warfare Systems Command (NIWC) under Contract No. N65236-22-C-8011. Any opinions, findings and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of DARPA or NIWC Atlantic.